Many companies discover the APAC problem the same way. Their app works well from a U.S. or European region until customers in China, Southeast Asia, or nearby financial centers start reporting slow dashboards, delayed checkouts, and inconsistent session performance.

That is where Hong Kong dedicated servers become a strategic tool rather than another hosting option. If your users sit across Asia and your workloads need predictable compute, cleaner routing, and tighter control than a shared cloud instance can provide, Hong Kong belongs on the shortlist.

It is not a perfect answer for every workload. Performance is excellent, but legal and data governance questions are more complex than many hosting guides admit. That trade-off matters. A good deployment plan treats Hong Kong as both an acceleration point and a jurisdiction that requires careful design.

Introduction: Why Your Business Needs a Gateway to Asia

A common pattern looks like this. An ecommerce brand launches in North America, adds customers across APAC, and keeps the same hosting footprint because the application “still works.” Then support tickets start arriving from users who can browse the catalog but feel lag during login, search, and checkout.

The same thing happens to SaaS platforms. Admin panels load fast for the home office, but APAC tenants see slow report generation, higher session friction, and more complaints during peak business hours. Teams try to patch over the issue with CDN caching alone, but dynamic traffic, authenticated requests, APIs, and database-heavy workflows still suffer.

Hong Kong dedicated servers solve the problem when the issue is not content delivery but application placement. Putting dedicated infrastructure closer to APAC demand gives you more control over routing, bandwidth, operating system tuning, storage layout, and security posture.

That matters for workloads such as:

- Customer-facing commerce with Magento, WooCommerce, or custom carts

- Private application stacks where root access and custom middleware are required

- Latency-sensitive APIs serving partners across Asia

- Virtualization nodes for teams building regional staging, failover, or private cloud capacity

Dedicated hosting in Hong Kong is useful when you need a front-end or regional application tier near users, but still want freedom to design the rest of the stack your own way.

If your APAC users have outgrown a distant region, start by evaluating whether a bare metal footprint in Hong Kong can remove the latency bottleneck at the application layer.

Hong Kong: The Premier Digital Hub for APAC

Hong Kong has become a serious infrastructure location because it combines regional proximity with mature carrier and facility ecosystems. For many teams, that mix is more important than raw server specs.

The density of enterprise-grade facilities is one reason. According to Mordor Intelligence’s Hong Kong data center market analysis, large data center facilities hold 38.20% market share in 2025, Tseung Kwan O represents 36.50% of total market value in 2025, and Tier 3 facilities account for 64.70% revenue share in 2025. That combination points to a market shaped around enterprise-grade reliability, scale, and connectivity rather than small, fragmented hosting footprints.

Why location inside Hong Kong matters

Not all Hong Kong hosting is equal. Facilities in established data center zones benefit from stronger power design, denser carrier presence, and better operational maturity.

Tseung Kwan O stands out because the area has become a magnet for larger sites. For infrastructure buyers, that translates into better options for cross-connects, upstream diversity, and future expansion. If you are planning a dedicated fleet, hybrid architecture, or private cloud extension, a dense facility market reduces the odds of painting yourself into a corner later.

Teams that need carrier choice should also look closely at whether the facility is neutral and built for network flexibility. A useful benchmark is the model behind a carrier neutral datacenter approach, where interconnection options support routing strategy instead of locking you into a narrow transport design.

Why Tier 3 is the practical sweet spot

Tier 3 matters because most businesses need concurrently maintainable infrastructure, not the cost profile of the most extreme fault-tolerant build. In practice, that means maintenance can happen without taking your environment offline, assuming the rest of your architecture is also built correctly.

For dedicated servers, that is a strong middle ground:

- Reliable enough for production enterprise workloads

- More economical than overbuying for theoretical edge cases

- Well suited to mixed fleets that include web, database, caching, and virtualization nodes

Tip: If your application has a realistic tolerance for maintenance windows at the application layer, spend more attention on network design, backup architecture, and storage resilience than on chasing the most expensive facility tier by default.

Why Hong Kong fits regional expansion

Hong Kong works well when you need a regional gateway rather than a single-country deployment. It gives organizations a strong operating point for serving nearby markets while keeping infrastructure close to important commercial and connectivity corridors.

That is especially useful for:

| Use case | Why Hong Kong fits |
|---|---|
| Regional ecommerce | Faster dynamic application response for APAC shoppers |
| Financial platforms | Strong facility ecosystem and enterprise-oriented infrastructure |
| Developer platforms | Better placement for APIs, CI mirrors, and regional app nodes |
| Hybrid cloud | Good anchor point for front-end or edge application tiers |

The takeaway is simple. Hong Kong is not just “another Asian location.” It is one of the few places where facility scale, network density, and enterprise operating models align cleanly for dedicated hosting.

Unlocking Performance with Low-Latency Connectivity

The main reason buyers look at Hong Kong is performance. Not synthetic benchmark performance. User-facing performance.

Premium facilities in Hong Kong show why. According to DataPacket’s Hong Kong data center profile, sites such as Equinix HK2 provide 290+ Tbps of network capacity and connectivity to 85+ networks, which supports offers with unmetered bandwidth up to 200 Gbps and average pings to Shanghai under 30ms, described as a 40-60% improvement over other regional hubs.

That kind of difference changes application behavior in ways users notice.

Where low latency pays off

For static content, a CDN can hide a lot. For live applications, it cannot hide everything.

A Hong Kong dedicated server helps most when requests must reach the origin for logic or data access:

- Checkout and cart flows where every extra round trip hurts conversion

- Authenticated SaaS sessions with frequent API calls

- Trading, quoting, and dashboard interfaces that feel broken when response times drift

- Gaming backends and real-time services that depend on stable routes

If your traffic profile includes a lot of cache misses, session writes, database reads, or authenticated API requests, server location matters.
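To make the cost of distance concrete, here is a minimal sketch that multiplies sequential origin round trips by round-trip time. The function name, the round-trip count, and both RTT values are hypothetical placeholders rather than measurements; substitute figures from your own traces.

```python
def origin_wait_ms(round_trips: int, rtt_ms: float, server_time_ms: float = 0.0) -> float:
    """Total wait for a flow that needs `round_trips` sequential origin requests."""
    return round_trips * (rtt_ms + server_time_ms)


# Hypothetical numbers for illustration only: a checkout flow that needs
# 8 sequential origin requests, served from a distant region vs. a nearby one.
distant = origin_wait_ms(round_trips=8, rtt_ms=200.0)
nearby = origin_wait_ms(round_trips=8, rtt_ms=30.0)
print(f"distant region: {distant:.0f} ms, nearby region: {nearby:.0f} ms")
```

The model ignores parallel requests and connection reuse, so treat it as an upper-bound intuition: the more sequential origin calls a flow makes, the more server placement dominates what the user feels.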

What to ask providers beyond “How many Gbps?”

Bandwidth headlines are easy to market. Routing quality is harder and more important.

Ask practical questions such as:

- Is the uplink shared or unshared? Dedicated uplinks matter when traffic bursts are part of normal business.

- What network mix is available? Carrier diversity beats dependence on a single upstream.

- How is routing monitored? Real-time route adjustments and active monitoring are worth more than brochure language.

- Can the provider explain expected path quality to your target users? If they cannot describe likely routing behavior, they may not manage it closely.

A network designed for Hong Kong dedicated servers should make dynamic traffic predictable, not just fast in a best-case test.

Key takeaway: Low latency is valuable only when the entire path is stable. A server with good CPU and storage still feels slow if the route is congested, lossy, or inconsistent.
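One way to check path stability rather than best-case speed is to summarize a series of round-trip samples instead of looking at a single ping. The sketch below assumes you have already collected samples in milliseconds with whatever tool you prefer; the sample values and the jitter definition (mean absolute difference between consecutive samples) are illustrative choices, not a standard the source prescribes.

```python
import statistics


def summarize_rtt(samples_ms: list[float]) -> dict[str, float]:
    """Summarize round-trip samples: median, worst case, and jitter."""
    if len(samples_ms) < 2:
        raise ValueError("need at least two samples")
    # Jitter here is the mean absolute difference between consecutive samples.
    diffs = [abs(b - a) for a, b in zip(samples_ms, samples_ms[1:])]
    return {
        "median_ms": statistics.median(samples_ms),
        "max_ms": max(samples_ms),
        "jitter_ms": statistics.fmean(diffs),
    }


# Hypothetical samples: both paths have a similar median, but one is unstable.
stable = summarize_rtt([28.0, 29.0, 28.0, 30.0, 29.0])
unstable = summarize_rtt([25.0, 90.0, 26.0, 27.0, 140.0])
print(stable)
print(unstable)
```

The two hypothetical paths have nearly the same median, yet the second has far higher jitter and a much worse maximum. That gap is what users experience as an inconsistent session.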

Performance architecture that works

For production workloads, a clean pattern is:

- keep the application tier in Hong Kong for regional responsiveness

- place replication, backups, or sensitive data services wherever your governance model requires

- isolate noisy neighbors by choosing dedicated hardware rather than oversubscribed shared environments

If your use case includes large file delivery, streaming, or sustained transfer, review options such as an unmetered bandwidth dedicated server so throughput planning does not become the bottleneck after latency has been fixed.

The result is not “faster hosting” in a generic sense. It is smoother user interaction, fewer timeout complaints, and less operational noise around APAC performance.

Navigating Data Sovereignty and Compliance Challenges

A common failure pattern looks like this. A team chooses Hong Kong for speed, places the full application stack there, then asks legal and security to review the setup after customer data, logs, and admin workflows are already tied to that region.

That order creates avoidable risk.

Hong Kong remains a strong location for APAC delivery, but the compliance picture is no longer simple. According to Koddos’s discussion of Hong Kong dedicated server considerations, the 2020 National Security Law and 2025 ordinance expansions create compliance risks, including potential data handover requests and content monitoring, which makes managed security and jurisdictional strategy critical.

The practical question is not whether Hong Kong is good or bad. The question is which parts of the stack belong there, and which do not.

Start with data classification, not server procurement

Teams often make one expensive mistake. They treat low latency as a reason to place every workload and every dataset in the same jurisdiction.

That is not the right design.

If the application handles customer records, identity data, internal messages, regulated files, or user-generated content, classify that data before anything is deployed. For many businesses, Hong Kong works best as a performance tier, not as the default home for every system of record.

This matters most for:

- SaaS vendors processing customer data across multiple jurisdictions

- Ecommerce operators storing payment-adjacent records, order histories, and support threads

- Media and community platforms exposed to content review and takedown pressure

- Professional services firms working under contract terms that limit data location or access

A practical architecture for balancing speed and control

The cleanest pattern is separation by sensitivity.

Use Hong Kong for components that benefit directly from proximity to users:

- web front ends

- reverse proxies

- CDN-adjacent application nodes

- caching layers

- read-heavy regional services

Keep higher-risk systems in a jurisdiction that better matches your governance requirements:

- primary customer databases

- key management systems

- long-term archives

- compliance log stores

- internal admin and reporting platforms

In this scenario, hybrid infrastructure earns its budget. You keep the user-facing workload close to APAC traffic, while limiting what sensitive data is stored or retained in Hong Kong. ARPHost’s managed services make that model easier to operate because the split between dedicated servers, security controls, and hybrid cloud resources can be planned as one deployment instead of stitched together later.

Controls that reduce exposure

Legal review sets the boundary. Infrastructure controls enforce it.

A workable checklist looks like this:

| Control area | Practical action |
|---|---|
| Data minimization | Store only the records the regional service needs to function |
| Segmentation | Separate edge, application, and data tiers so each has a clear purpose |
| Encryption | Encrypt data at rest and in transit, and keep key access tightly limited |
| Logging | Send audit logs to a lower-risk location when retention rules allow it |
| Access control | Restrict admin access, require role separation, and review privileged actions |
| Content governance | Define what content types can be hosted in the Hong Kong environment |

I give teams a simple rule. Put latency-sensitive services in Hong Kong. Keep legally sensitive data elsewhere unless there is a documented reason not to.
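That rule is simple enough to write down as a policy check. The sketch below is one hedged way to encode it; the `Component` fields, the `documented_exception` flag, and the placement labels are all hypothetical names introduced for illustration, and the real decision still belongs to legal review.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Component:
    name: str
    latency_sensitive: bool
    legally_sensitive: bool
    documented_exception: bool = False  # hypothetical flag: a written sign-off exists


def placement(c: Component) -> str:
    """Sensitive data stays out of Hong Kong unless an exception is documented."""
    if c.legally_sensitive and not c.documented_exception:
        return "elsewhere"
    if c.latency_sensitive:
        return "hong-kong"
    return "either"


# Hypothetical inventory for illustration.
inventory = [
    Component("reverse-proxy", latency_sensitive=True, legally_sensitive=False),
    Component("primary-customer-db", latency_sensitive=False, legally_sensitive=True),
    Component("session-cache", latency_sensitive=True, legally_sensitive=True),
]
for item in inventory:
    print(f"{item.name}: {placement(item)}")
```

Note that legal sensitivity is checked first, so a component that is both latency-sensitive and legally sensitive defaults to staying out of Hong Kong until someone documents why it should not.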

Compliance is now part of infrastructure design

This is an operating model issue, not just a legal one.

Infrastructure teams need clear answers to a few questions before launch. What data enters the Hong Kong environment? What remains only in memory or cache? What is replicated out immediately? What logs stay local? Who has administrative access, from where, and under which approval process?

For a broader governance view, Navigating Compliance for Modern SaaS Teams is useful because it treats compliance as a day-to-day operating discipline rather than a paperwork task.

Teams considering their own hardware in a third-party facility should also review what colocation hosting means. Colocation gives more control over hardware and policy, but it also shifts more responsibility to your team for physical access processes, security tooling, patch discipline, and audit readiness.

The business case is straightforward. Hong Kong can be an excellent APAC performance tier. It should be used with a clear data placement policy, defined controls, and an architecture that separates speed from sovereignty.

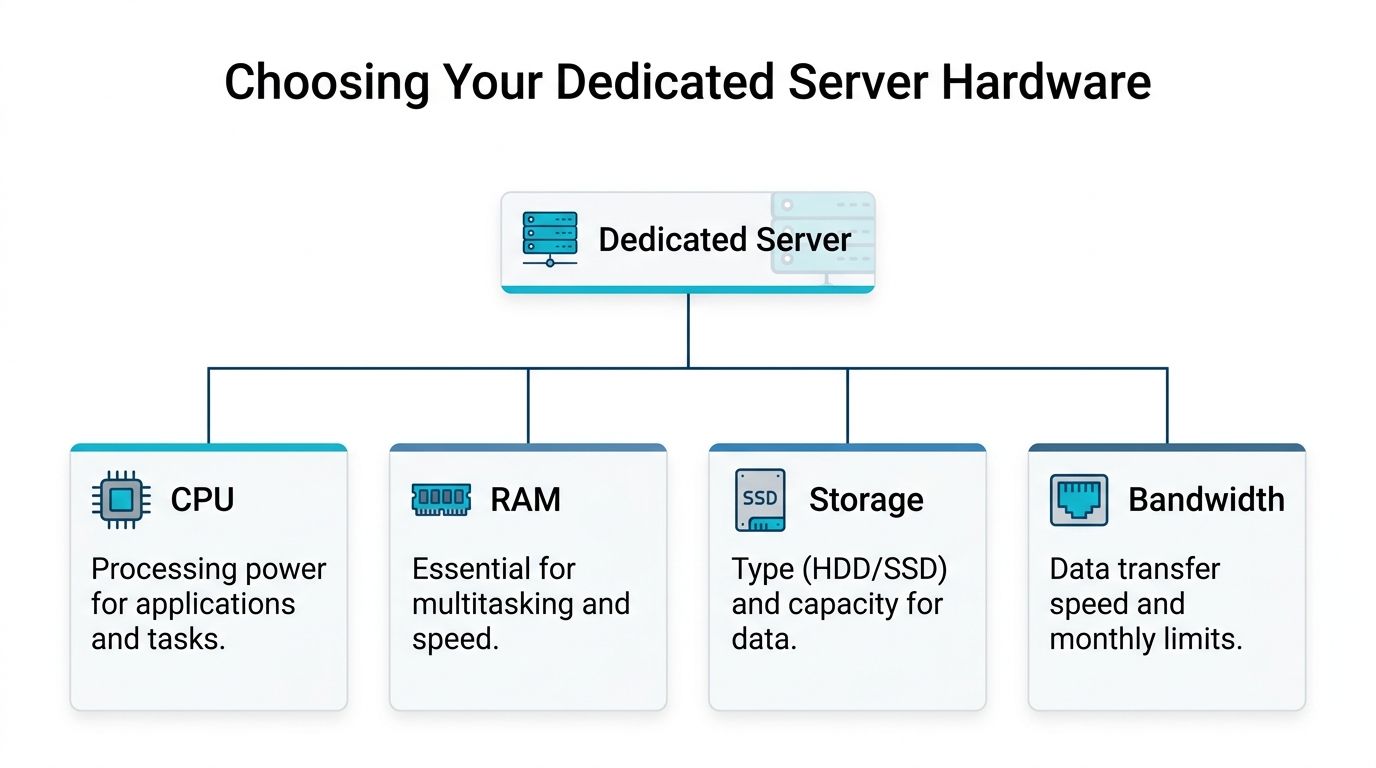

Choosing Your Server Hardware and Specifications

Once Hong Kong is the chosen region, the next mistake is buying hardware by headline instead of workload. That leads to one of two outcomes. Teams either overbuy expensive cores they never use, or underbuy storage and memory for applications that spend all day waiting on disk and cache pressure.

The market trend supports a rack-focused, scale-oriented buying model. According to Market Report Analytics on the Hong Kong data center server market, the market is projected to reach $700 million by 2028, with rack servers accounting for over 70% of the market. The same source notes that entry-level dedicated plans commonly start with Intel Xeon E3, 16GB RAM, 240GB SSD, and 100 Mbps international bandwidth, which is a workable baseline for SMB projects and development environments.

Start with the workload, not the catalog

Ask what the server is supposed to do.

A dedicated server for a single web application is not the same as a virtualization node, database host, or file-heavy content platform. The CPU profile, memory pressure, storage behavior, and bandwidth pattern differ enough that “general purpose” can mean “poorly matched.”

Use this framework:

- Web app node. Prioritize balanced CPU, enough RAM for caching, and SSD storage.

- Database-heavy workload. Prioritize fast storage and memory before chasing more cores.

- Virtualization host. Prioritize core count, RAM headroom, and storage consistency.

- Build or CI worker. Prioritize CPU scheduling and local disk responsiveness.

CPU choices that make sense

A lot of teams focus on processor branding first. That is backwards.

Choose CPU based on concurrency and application type:

- Lower to moderate concurrency web stacks run well on entry-level Xeon-class dedicated plans.

- Virtualization-heavy environments benefit from more cores and stronger memory capacity.

- Mixed service nodes need enough threads to prevent one noisy task from affecting everything else.

Do not buy a large CPU for an application that is blocked by database latency or poor query design. In many production environments, storage and memory tuning move the needle more than another jump in processor class.

RAM sizing is usually where underbuying hurts

Memory pressure creates the kind of slowness that users interpret as instability.

You need enough RAM for:

- the OS and service daemons

- application workers

- query cache or object cache

- burst traffic

- maintenance tasks like backups, indexing, and updates

For WordPress, Magento, Node services, or Java applications, memory headroom matters because background jobs and cache warmup compete with live requests. For Proxmox or KVM-based environments, RAM planning is even more important because overcommit decisions become an operational risk.
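A rough sizing pass can be as simple as summing an estimate for each item in the list above and adding headroom. The sketch below does exactly that; the per-component figures and the 25% headroom default are hypothetical and should be replaced with numbers observed on your own hosts.

```python
def required_ram_gb(components_gb: dict[str, float], headroom: float = 0.25) -> float:
    """Sum per-component memory estimates and add proportional headroom."""
    if not 0 <= headroom < 1:
        raise ValueError("headroom must be in [0, 1)")
    return sum(components_gb.values()) * (1 + headroom)


# Hypothetical estimates in GB, for illustration only.
estimate = {
    "os_and_daemons": 2.0,
    "application_workers": 6.0,
    "query_or_object_cache": 4.0,
    "burst_traffic": 2.0,
    "maintenance_tasks": 2.0,
}
print(f"plan for at least {required_ram_gb(estimate):.0f} GB")
```

With these placeholder values the estimate lands above a 16 GB plan, which is the kind of gap that only shows up once background jobs and live requests overlap.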

Storage is where performance becomes obvious

For transactional applications, NVMe should be the default starting point unless the workload is light. Slow storage can make a healthy server look broken.

This is especially true for:

- Magento catalogs with heavy database access

- ERP systems with frequent reads and writes

- API platforms with queue activity

- virtualization nodes running many guest disks

Key takeaway: If users complain that the site feels “slow everywhere,” check storage latency and memory pressure before blaming the CPU.
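On a Linux host, a first look at memory pressure can come from `/proc/meminfo`. The sketch below parses that format and reports how much memory the kernel considers available. The sample text and the idea of flagging a low ratio are illustrative assumptions, and storage latency needs a separate tool.

```python
def parse_meminfo(text: str) -> dict[str, int]:
    """Parse /proc/meminfo-style text into a mapping of field name to its number."""
    fields = {}
    for line in text.splitlines():
        name, sep, rest = line.partition(":")
        parts = rest.split()
        if not sep or not parts:
            continue  # skip blank or malformed lines
        fields[name.strip()] = int(parts[0])
    return fields


def available_ratio(fields: dict[str, int]) -> float:
    """Fraction of total memory the kernel reports as available."""
    return fields["MemAvailable"] / fields["MemTotal"]


# Hypothetical sample in the /proc/meminfo layout (values in kB).
SAMPLE = """MemTotal:       16000000 kB
MemFree:          400000 kB
MemAvailable:    1600000 kB
"""
ratio = available_ratio(parse_meminfo(SAMPLE))
print(f"available memory: {ratio:.0%}")
```

On a real server you would pass in the contents of `/proc/meminfo` instead of `SAMPLE`. A persistently low ratio during peak hours suggests the host is short on RAM before it is short on CPU.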

Bandwidth planning should match user behavior

Do not buy bandwidth based only on average transfer. Think about traffic shape.

Consider:

- bursty downloads

- media-heavy pages

- replication windows

- backup movement

- software distribution or package mirrors

A low-end plan may be enough for development, staging, or internal tools. Customer-facing production needs more room for peaks, particularly in APAC where regional traffic can concentrate sharply around business hours and promotions.
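The difference between average and peak demand is easy to see with a quick conversion from request rate to megabits per second. The request rates and response size below are hypothetical; the 100 Mbps comparison point is the entry-level plan figure cited earlier.

```python
def required_mbps(requests_per_second: float, avg_response_kb: float) -> float:
    """Convert a request rate and average response size into megabits per second."""
    kilobits_per_second = requests_per_second * avg_response_kb * 8  # 1 kB = 8 kilobits
    return kilobits_per_second / 1000  # decimal megabits


# Hypothetical traffic shape: same page weight, average vs. promotional peak.
average = required_mbps(requests_per_second=40, avg_response_kb=250)
peak = required_mbps(requests_per_second=200, avg_response_kb=250)
print(f"average: {average:.0f} Mbps, peak: {peak:.0f} Mbps")
```

In this example the average fits inside a 100 Mbps plan while the peak needs several times that, which is why sizing on average transfer alone tends to fail during promotions.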

For organizations building private infrastructure rather than a single server, a Proxmox-based design can be more flexible than adding standalone boxes one at a time. That is especially true when you expect growth, migrations from VMware, or a mix of VMs and lightweight containers.

Managed Versus Unmanaged Servers: A Practical Comparison

Buyers often make the wrong financial decision here while thinking they are saving money.

An unmanaged dedicated server gives you full control. It also gives you full responsibility. If your team is strong in Linux administration, patching, backups, firewall policy, monitoring, and incident response, that can be the right choice. If not, unmanaged hosting tends to create hidden operating costs.

The hardware side can be excellent either way. According to HostColor’s Hong Kong dedicated server overview, high-end configurations can use Intel Xeon E5-2650v2 processors with 1.92TB NVMe SSDs and deliver over 500K IOPS. The same source notes that a managed service layer can add RAID-1 mirroring, improving fault tolerance and reducing data loss risk by 99.9%.

What unmanaged really means

Unmanaged is not “cheaper hosting.” It means your team owns tasks such as:

- operating system updates

- service hardening

- intrusion response

- backup validation

- disk health checks

- log review

- firewall changes

- performance troubleshooting

For a mature DevOps team, that may be normal. For a small business or lean engineering group, those jobs get deferred until something breaks.

Where managed service makes financial sense

Managed service is a better fit when uptime and internal focus matter more than doing every task in-house.

A practical comparison:

| Model | Works best for | Common risk |
|---|---|---|
| Unmanaged | Skilled infra teams that want complete OS-level control | Security and maintenance tasks slip during busy periods |
| Managed | SMBs, lean dev teams, and businesses without round-the-clock admins | Less freedom if you expect total DIY operations |

The primary cost question is not monthly price. It is whether your team should spend time fixing package conflicts, tuning backups, replacing failed components, and responding to alerts at odd hours.

Tip: If your application generates revenue directly, compare managed service cost against one serious outage, one restore event, or one delayed security patch. The math changes quickly.
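The comparison in that tip can be sketched as a break-even check. Every figure below is a hypothetical placeholder, and the model is deliberately crude: it only asks whether the outage cost you expect to avoid in a year covers the yearly price difference between managed and unmanaged service.

```python
def managed_breaks_even(
    managed_premium_per_month: float,
    outage_cost: float,
    outages_avoided_per_year: float,
) -> bool:
    """True when expected avoided outage cost covers the yearly managed premium."""
    yearly_premium = managed_premium_per_month * 12
    expected_savings = outage_cost * outages_avoided_per_year
    return expected_savings >= yearly_premium


# Hypothetical inputs: a 300/month premium against one outage avoided every two years.
print(managed_breaks_even(300.0, outage_cost=10_000.0, outages_avoided_per_year=0.5))
print(managed_breaks_even(300.0, outage_cost=2_000.0, outages_avoided_per_year=0.5))
```

The first case prints `True` and the second `False`, so the answer depends heavily on what one outage actually costs the business. Staff time spent on maintenance is left out here and would push the result further toward managed service.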

A useful decision rule

Choose unmanaged if your team already has documented runbooks, on-call coverage, and the discipline to maintain production hosts properly.

Choose managed if your business needs dedicated performance but does not want infrastructure maintenance to become a second full-time job.

For many buyers of Hong Kong dedicated servers, the best compromise is full root access on dedicated hardware with provider-managed monitoring, backup strategy, storage resilience, and security operations. That keeps the application flexible while reducing operational drag.

Your Hong Kong Server Deployment Checklist

Buying the server is the easy part. Deploying it without creating new problems takes more discipline.

Finalize the role of the server

Do not start with “we need a server in Hong Kong.” Start with a precise role.

Is it:

- a production web node

- a regional API endpoint

- a database replica

- a Proxmox host

- a disaster recovery target

That answer determines security policy, storage design, and migration order.

Vet the provider like an operator

Before signing anything, verify the things that affect operations after launch.

Check:

- support responsiveness and escalation path

- remote hands availability

- hardware replacement process

- network transparency

- backup options

- whether managed support exists if your in-house plan changes

If you expect to colocate or expand later, confirm that the provider can support that evolution without forcing a redesign.

Plan the migration in layers

A clean migration happens in this order:

1. Build the server with a hardened base configuration

2. Restore or deploy the application

3. Sync data carefully based on workload sensitivity

4. Run validation tests for app behavior, logging, and access controls

5. Cut over traffic in a controlled window

6. Monitor hard during the first production cycle

For virtualization projects, especially moves from VMware to Proxmox, test the converted workload before final cutover. Boot success does not guarantee production readiness.
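The layered order above works best when each step gates the next. Here is a minimal sketch of that idea: a runner that executes steps in sequence and halts at the first failure, so traffic is never cut over after a failed validation. The step names and the lambda stand-ins are hypothetical; real steps would call your own build, sync, and test tooling.

```python
from typing import Callable

Step = tuple[str, Callable[[], bool]]


def run_migration(steps: list[Step]) -> list[str]:
    """Run steps in order; stop at the first failure and return completed names."""
    completed = []
    for name, action in steps:
        if not action():
            print(f"halted at: {name}")
            break
        completed.append(name)
    return completed


# Hypothetical plan in which validation fails, so cutover never runs.
plan: list[Step] = [
    ("build hardened base", lambda: True),
    ("deploy application", lambda: True),
    ("sync data", lambda: True),
    ("run validation tests", lambda: False),
    ("cut over traffic", lambda: True),
]
done = run_migration(plan)
print(done)
```

Because the runner returns the list of completed steps, the same record can feed the runbook and make it obvious where a rollback has to start.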

Optimize after launch

The first deployment is seldom the final tuned state.

After cutover, review:

- application response behavior

- cache hit quality

- worker process sizing

- storage pressure

- log noise

- security alerts

- backup completion and restore testing

If the environment will host websites, admin tools such as Webuzo and instant application deployment can simplify operational overhead. If the environment will host VMs, document templates, backup schedules, and patch windows immediately so growth does not create chaos.

A good launch ends with a stable runbook, not just a live IP.

Frequently Asked Questions About Hong Kong Servers

Can I host content for mainland China without an ICP license?

Sometimes, yes. The harder question is whether you should rely on Hong Kong as a long-term answer for that audience.

A Hong Kong deployment can serve users near mainland China without placing the workload inside the mainland licensing framework, but that does not remove legal review, content controls, or routing risk. For marketing sites and regional applications, this can be a practical compromise. For regulated content, media-heavy platforms, or services likely to draw scrutiny, legal counsel should review the design before launch.

What is the practical difference between premium China-oriented routing and standard transit?

It shows up in user behavior and support load.

Premium China-focused routing usually delivers steadier paths into mainland networks, with fewer spikes in response time and fewer intermittent access complaints. Standard transit can still work well for broader APAC traffic, but performance is less consistent for sessions that depend on repeated requests, such as logins, admin panels, checkout flows, and API calls. If the business case depends on reliable user experience in mainland China, pay for the better routing. If the workload is mostly static content or a secondary regional service, standard transit may be enough.

Should I choose a single dedicated server or a private cloud design?

Choose based on failure tolerance and operational model, not just price.

A single dedicated server fits stable application stacks, predictable resource use, and teams that want maximum performance per dollar. A private cloud design is usually better when workloads need VM separation, staged growth, snapshots, or easier movement from older virtualization platforms. In practice, many APAC deployments start on one strong dedicated node and shift to a hybrid or private cloud layout once compliance boundaries, backup policy, or service sprawl become harder to manage on one box.

Are Hong Kong dedicated servers a good fit for ecommerce?

They can be, particularly for stores serving customers across East and Southeast Asia where application responsiveness affects conversion.

The strongest results come from keeping the transactional app tier close to users, using NVMe storage, and treating the database carefully. That said, low latency is only part of the decision. If the store handles sensitive customer records, payment-related systems, or data subject to strict residency rules, a split design is often smarter. Put the performance-sensitive front end and app services in Hong Kong, and keep the most sensitive systems in another jurisdiction or inside a managed hybrid environment.

How should I handle sensitive data in a Hong Kong deployment?

Use data minimization by default.

Keep only the systems in Hong Kong that benefit directly from proximity to APAC users. Place key management, highly sensitive records, long-term archives, and certain regulated datasets where your legal and compliance team is more comfortable. Hong Kong is attractive because of connectivity and market access, but the National Security Law changes the risk discussion for some organizations. The right answer is seldom "put everything there" or "avoid it completely." It is usually a segmented architecture with clear data boundaries, documented access controls, and managed oversight so performance gains do not create unnecessary exposure.

If you need help choosing between bare metal, VPS, private cloud, colocation, or a managed hybrid design for APAC workloads, ARPHost, LLC offers U.S.-based infrastructure guidance, secure hosting options, Proxmox private clouds, and fully managed IT services. Explore VPS hosting, review secure web hosting bundles, compare managed services, or deploy dedicated infrastructure with bare metal servers.